Simple Airports Analysis - Malaysia

Contents

- Introduction

- Technology Used

- Requirements

- Data Source

- Using this project

- Step 1. Initial setup

- Step 2. Running the workflow

- Alternatively, to run tasks manually (i.e. without using Luigi)

- Optionally, to replicate Luigi’s web visualisation

- Step 3. Analysis on Metabase (Optional)

- Step 4. Viewing dbt documentation (Optional)

- Step 5. Done

- Future Features and Improvements

- Author

Introduction

In this project, we want to run simple analysis on airport and arrival data.

We want to know:

- How many airports are there in Malaysia?

- What is the distance between the airports in Malaysia?

- How many flights are going to land in Malaysian airports the next day from the point of query?

- Which airport is most congested base on the information gathered in question 3 above?

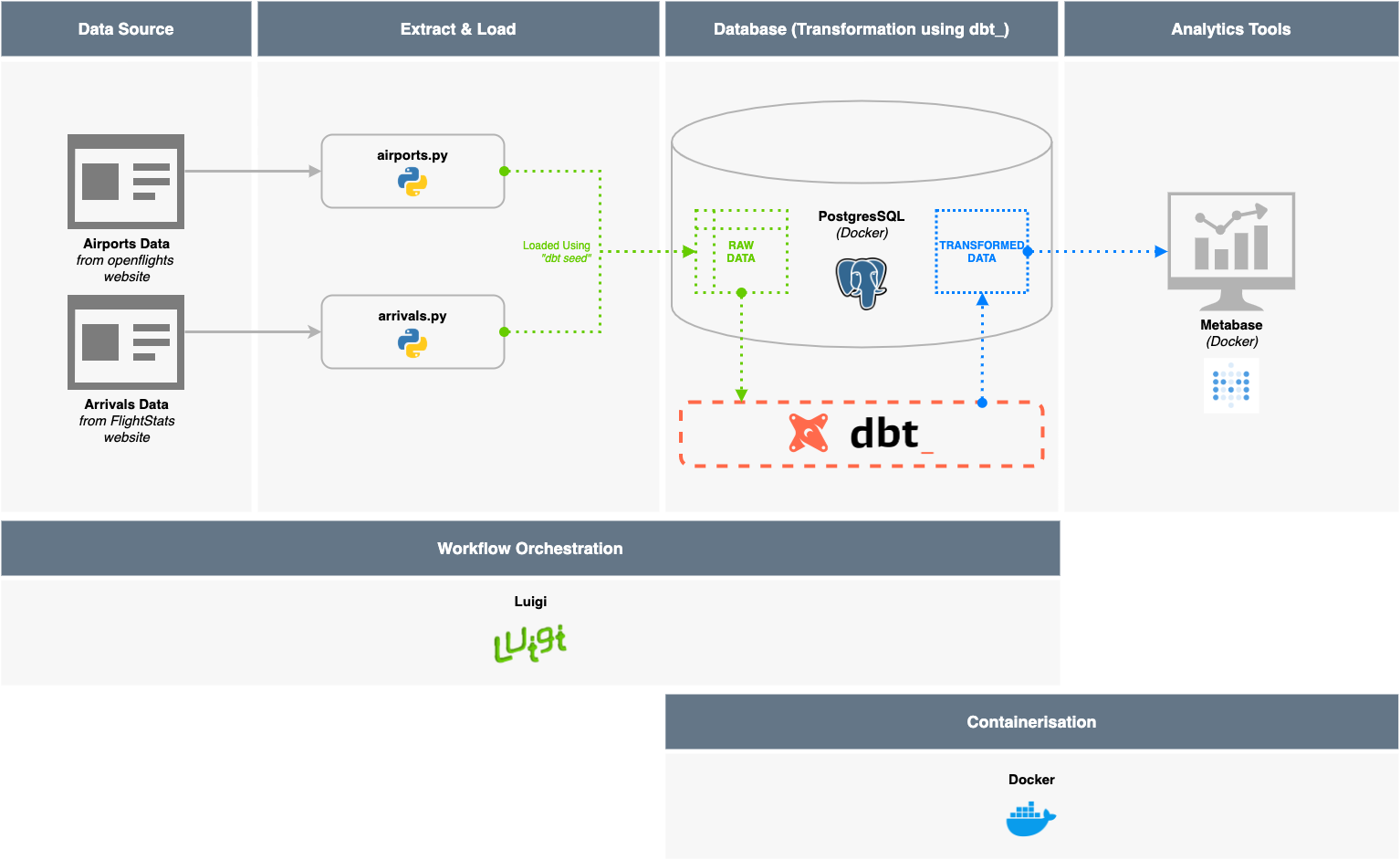

To answer these questions, we built an end-to-end framework with ETL pipelines, a database, and an analytics platform as seen in the Project Framework diagram below. (Admittedly a little over-engineered but, hey! Why not…? :trollface:)

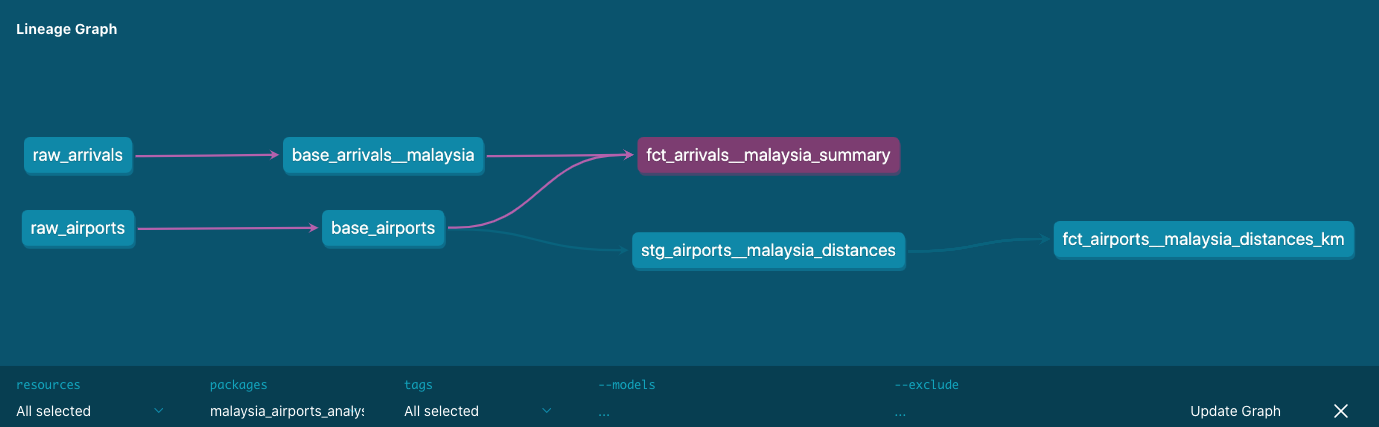

The relationship between the tables used to answer the questions above can be visualised using the Table Lineage.

We also hosted a version of the dbt documentation on GitHub which would contain the table descriptions, data dictionaries, and the SQL queries—both raw and compiled—used to generate the tables.

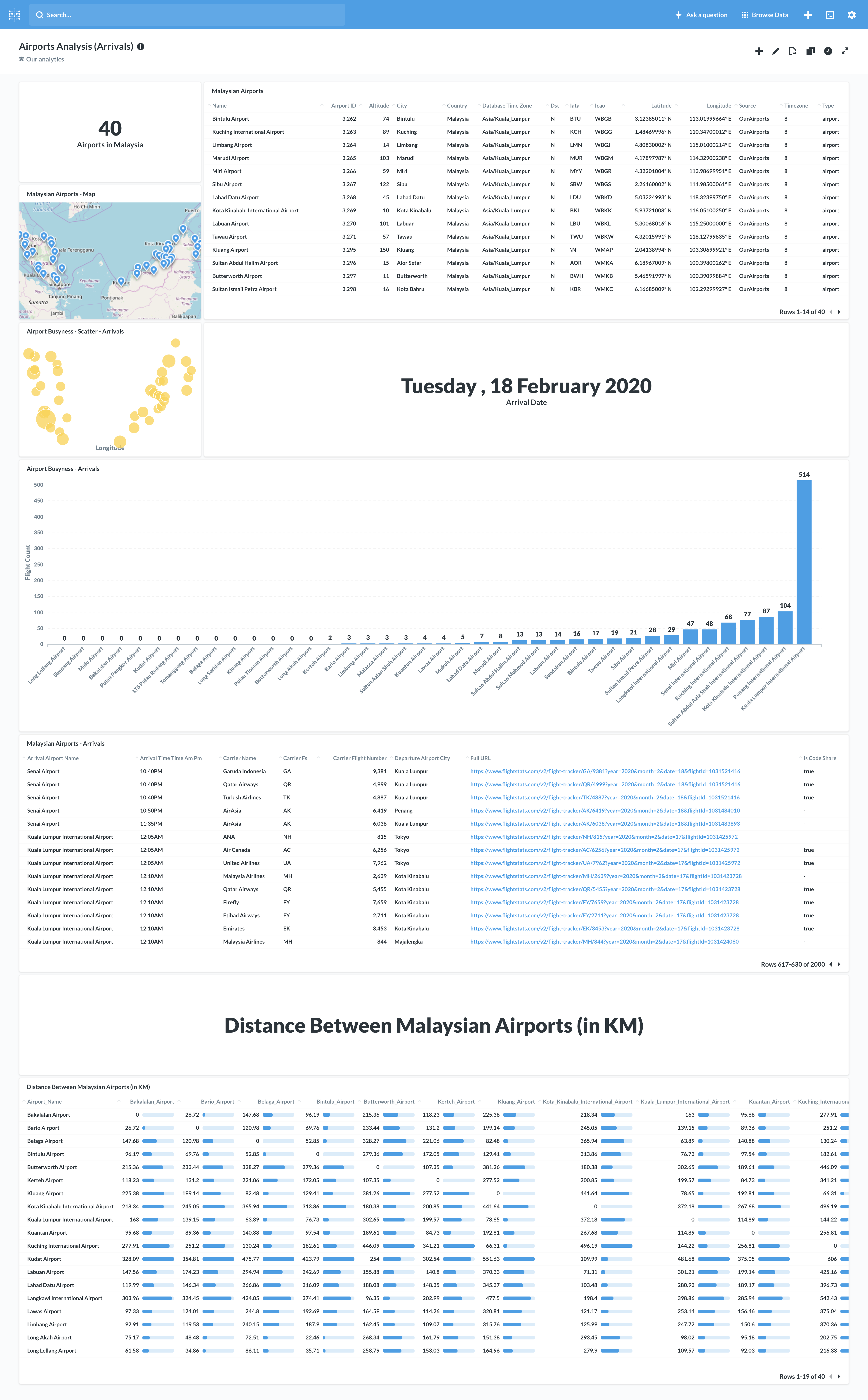

Finally, to visualise the data and help us answer the questions, we used Metabase—an open source business intelligence tool—to create an Analytics Dashboard.

Project Framework

Covered in Step 1, 2, and 3

Table Lineage (via dbt)

Covered in Step 4 (Optional) - view the dbt documentation

Table Documentation (via dbt)

Covered in Step 4 (Optional) - view the dbt documentation

Metabase Dashboard

Covered in Step 3 (Optional)

Technology Used

| Database | (ETL/ELT) Pipeline | Workflow Orchestration | Analytics Platform | |—————————|—————————–|—————————-|—————————| | Postgres (using Docker) | Python 3.6 | Luigi (using Python) | Metabase (using Docker) | | | dbt (Data Transformation) | | |

Developer notes

This project was created on macOS. It has not yet been tested on Windows.

Requirements

- Python 3.6 or above

- (Optional) Use a virtual environment - see this guide

IMPORTANT- For libraries used, seerequirements.txt. Includes:

- Docker

- PostgresSQL - Database

- Metabase - Analytics Platform

- Other Software

- DBeaver - SQL Client to view the database

Data Source

We primarily use Airport and Arrival data from these two sources:

- Airport Data

- https://raw.githubusercontent.com/jpatokal/openflights/master/data/airports.dat

- Arrival Data

- https://www.flightstats.com/v2/flight-tracker/search

- Specifically, we will scrape arrival information by looping through this site for each airport

- Example link scraped: https://www.flightstats.com/v2/flight-tracker/arrivals/KUL/?year=2020&month=2&date=18&hour=6

- https://www.flightstats.com/v2/flight-tracker/search

Using this project

Step 1. Initial setup

1) Install Python 3.6 using these instructions.

(Optional) Use a Python virtual environment

2) IMPORTANT - Install required libraries, at the command line:

bash

$ pip install -r requirements.txt

This will install the Python libraries required for this project:

luigi- for (one-script) workflow orchestrationdbt- for uploading raw data and data transformation in databaserequests- to scrape websitesSQLAlchemy- for database connection in scriptspandas- for flattening of extracted (JSON) data and saving to csvPyYAML- for configuration extraction

3) Install Docker using these instructions.

4) Install PostgresSQL via Docker using the instructions below (source), at the command line: ```bash $ docker pull postgres

$ mkdir -p $HOME/docker/volumes/postgres

$ docker run --rm --name pg-docker -e POSTGRES_PASSWORD=docker -d -p 5432:5432 -v $HOME/docker/volumes/postgres:/var/lib/postgresql/data postgres

```

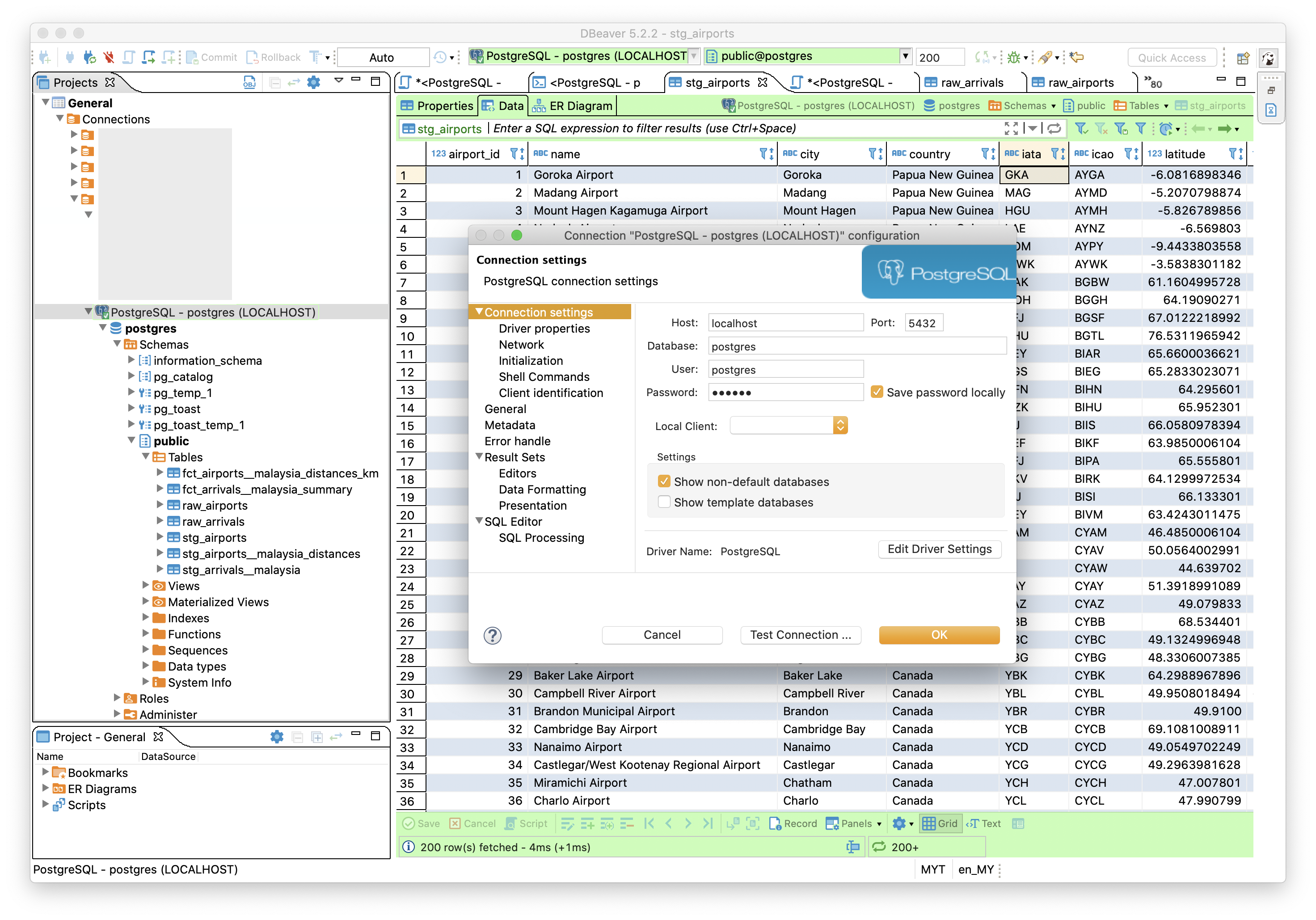

5) Install a suitable SQL Client such as DBeaver.

Use the default connection configuration to verify the database connection and view the data that will be added in the later steps:

```yaml

Host: localhost

Database: postgres

Port: 5432

User: postgres

Password: docker

```

Step 2. Running the workflow

This step utilises the Luigi workflow orchestration that automates the entire ELT pipeline.

To run each tasks in the workflow manually, see this guide. 1) Run the

workflow.pyfile which will initiate the luigi workflow orchestration, at the command line:bash $ python workflow.py --local-scheduler DbtRunAnalysisLuigi will work through all the steps as defined inworkflow.py.

> _Notes: This step may take some time since it is running the entire pipeline._

>

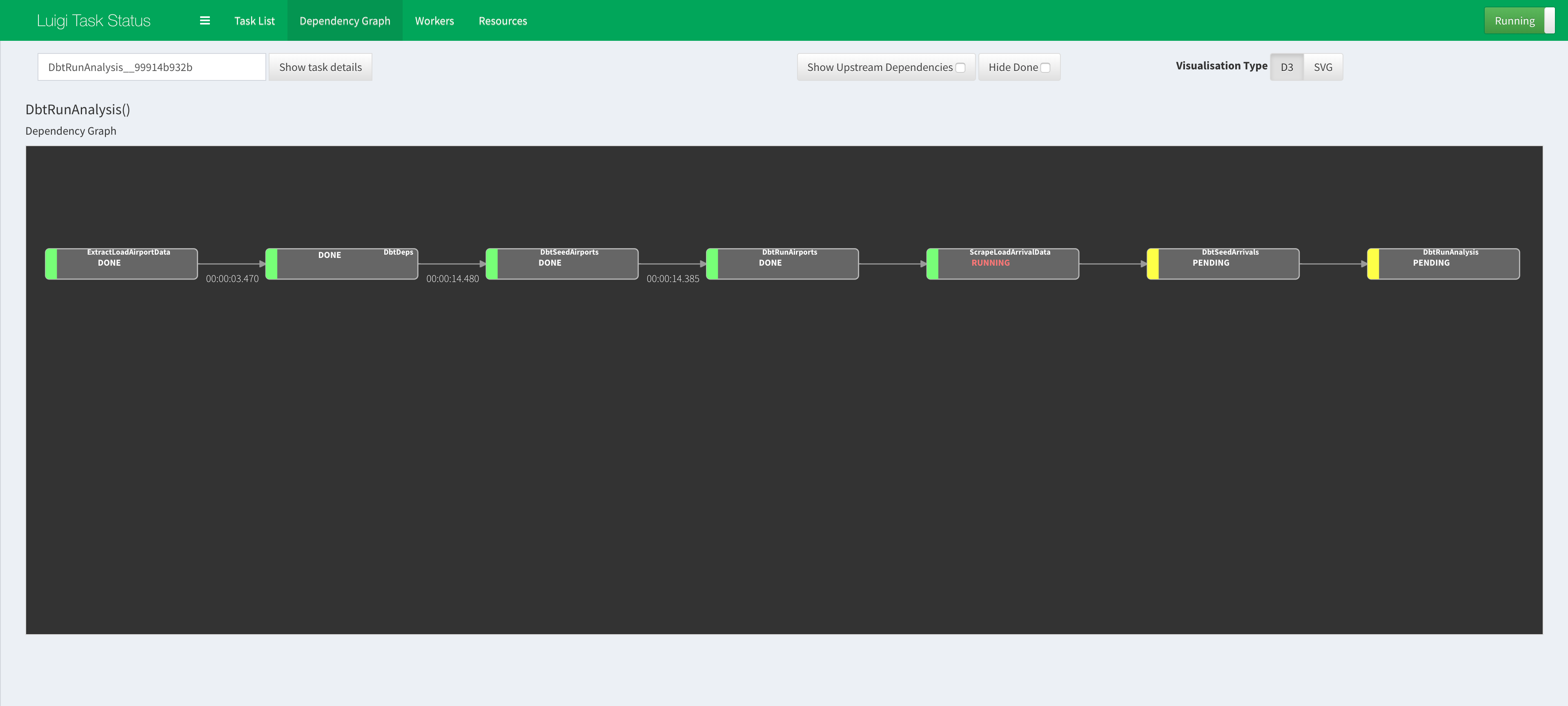

> _**(Optional)** Luigi has a web interface which can be used to monitor workflow and view dependency graphs as shown below. To replicate, follow [this guide](/simple-airports-analysis/docs/visualising-luigi.html)._

2) The following will be returned when the previous step completes successfully: ```bash INFO: ===== Luigi Execution Summary =====

Scheduled 7 tasks of which:

* 7 ran successfully:

- 1 DbtDeps()

- 1 DbtRunAirports()

- 1 DbtRunAnalysis()

- 1 DbtSeedAirports()

- 1 DbtSeedArrivals()

...

This progress looks :) because there were no failed tasks or missing dependencies

===== Luigi Execution Summary =====

```

> _Notes: You can view the 'logs' for each step in the files with `.output` extension that is generated in the root of the project directory._

3) The data should ready in the database as tables and can be viewed using the SQL Client that was installed in Step 1.5 above.

Step 3. Analysis on Metabase

Optional Step! Alternatively, the data can be viewed directly using the SQL client as mentioned in Step 1.5.

1) Install Metabase via Docker using the instructions below (source), at the command line:

bash

$ docker run -d -p 3000:3000 --name metabase metabase/metabase

Wait a little while as it takes time to initialise. You can use

docker logs -f metabaseto follow the rest of the initialization progress.

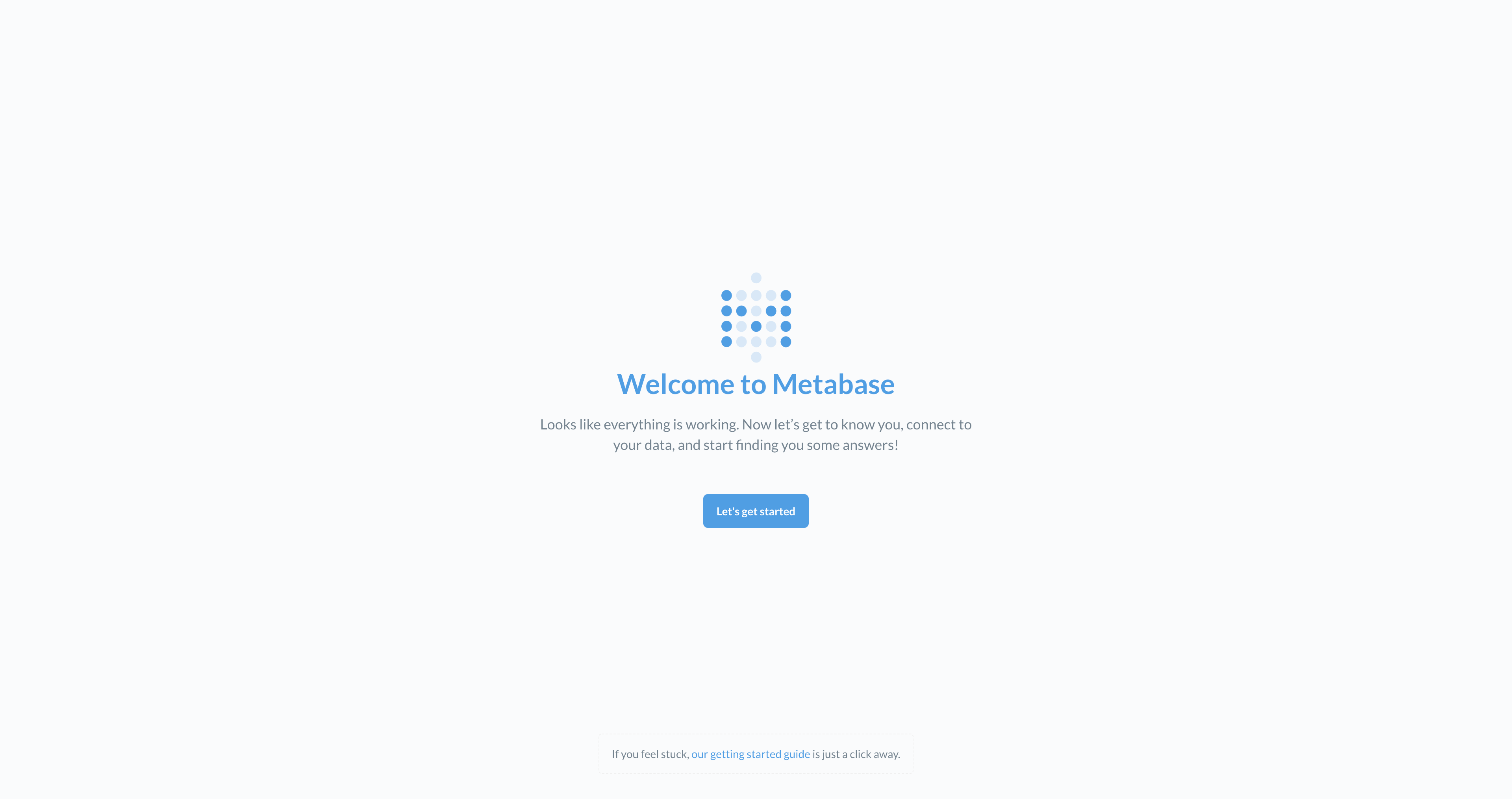

2) Once initialised, visit the local Metabase portal at http://localhost:3000/.

3) You will be directed to http://localhost:3000/setup, click on Let's get started.

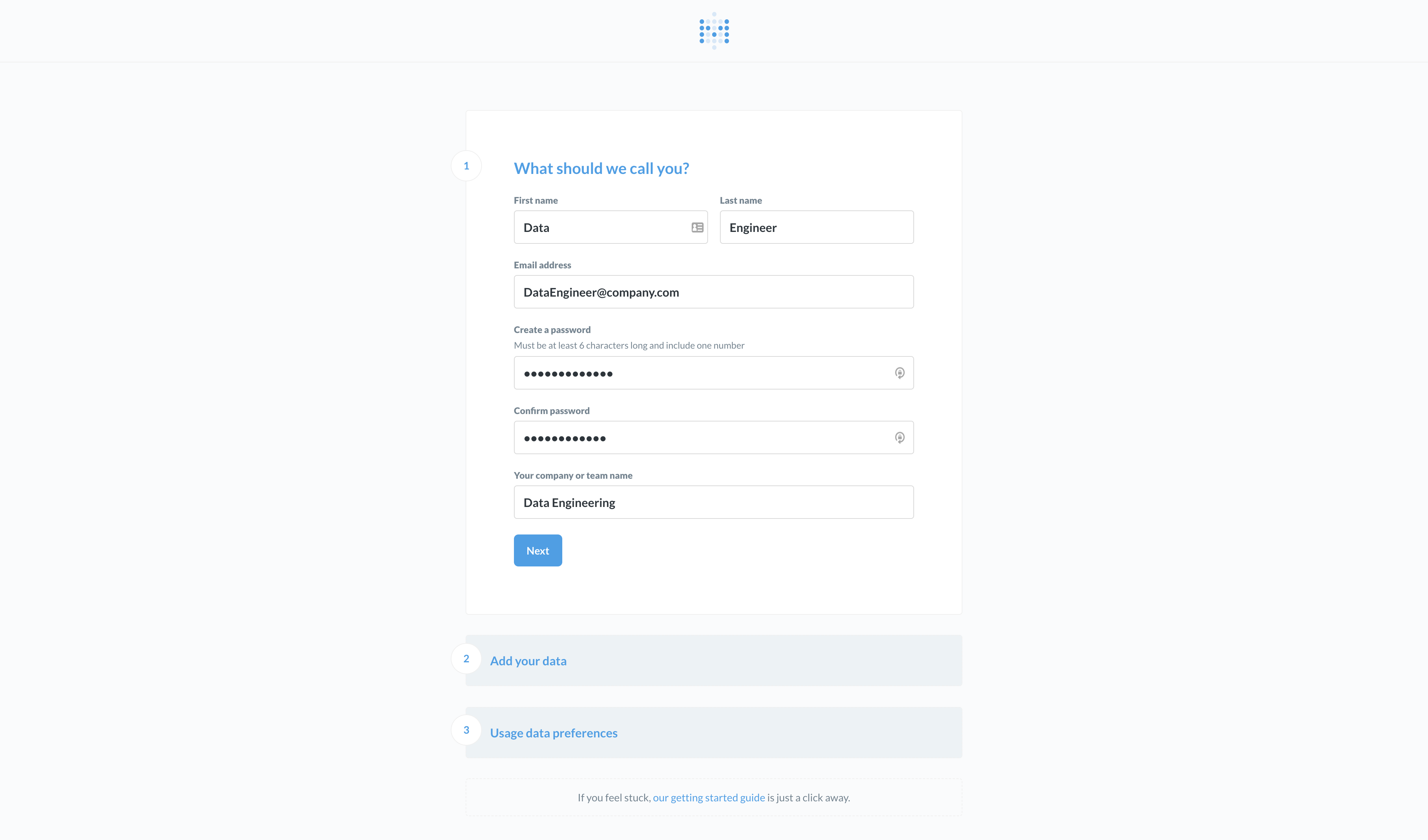

4) Fill in the details for your admin account.

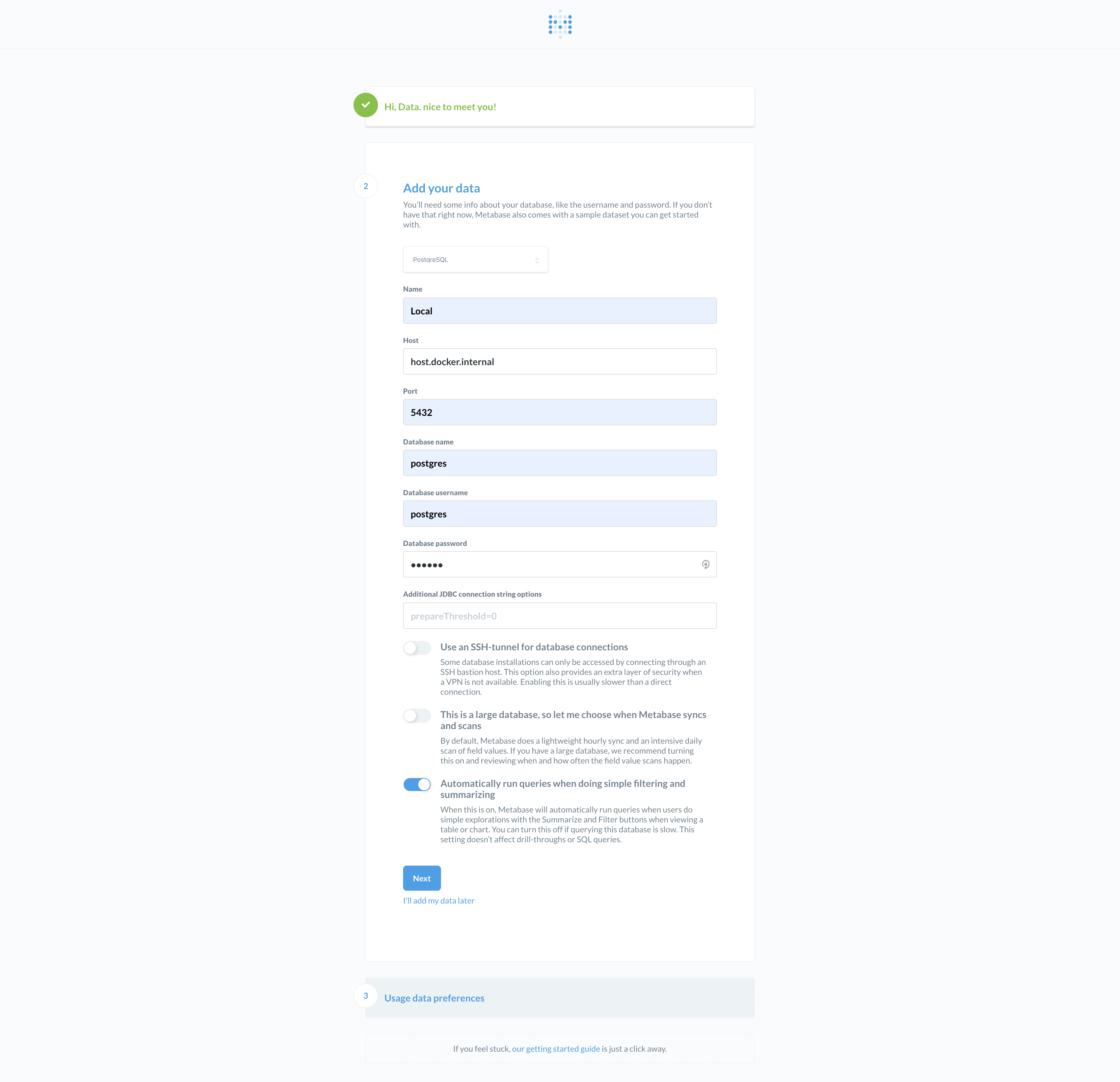

5) Important step - Use the following database connection configuration to connect Metabase to our Postgres instance in Docker:

yaml

Name: Local (Or any other name)

Host: host.docker.internal

Port: 5432

Database name: postgres

Database username: postgres

Database password: docker

> Please note that this has been tested to work on macOS. For Windows, if Metabase fails to connect to the above Host, please try referring to this official documentation on Docker Networking.

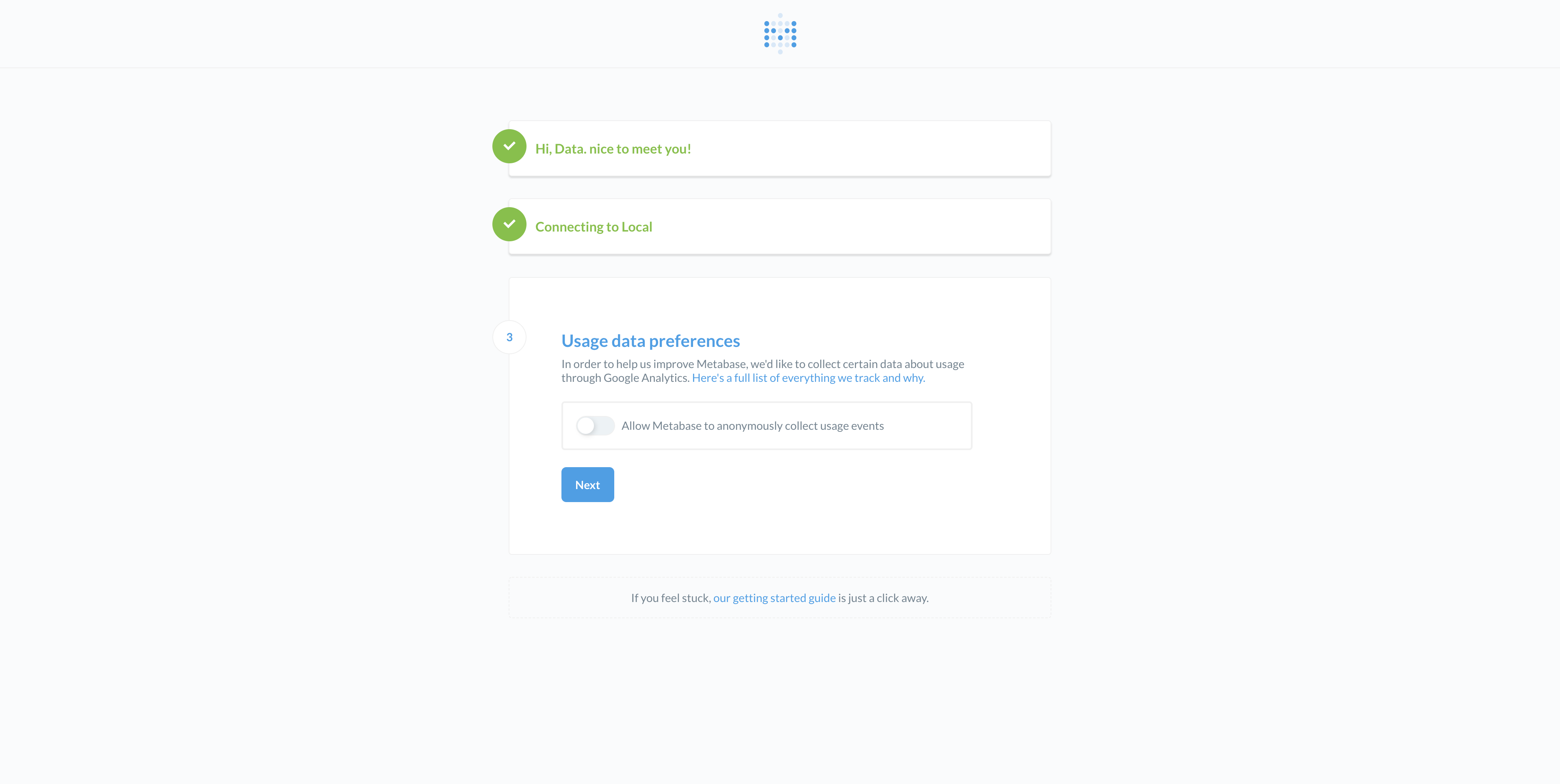

6) Choose your usage data preference.

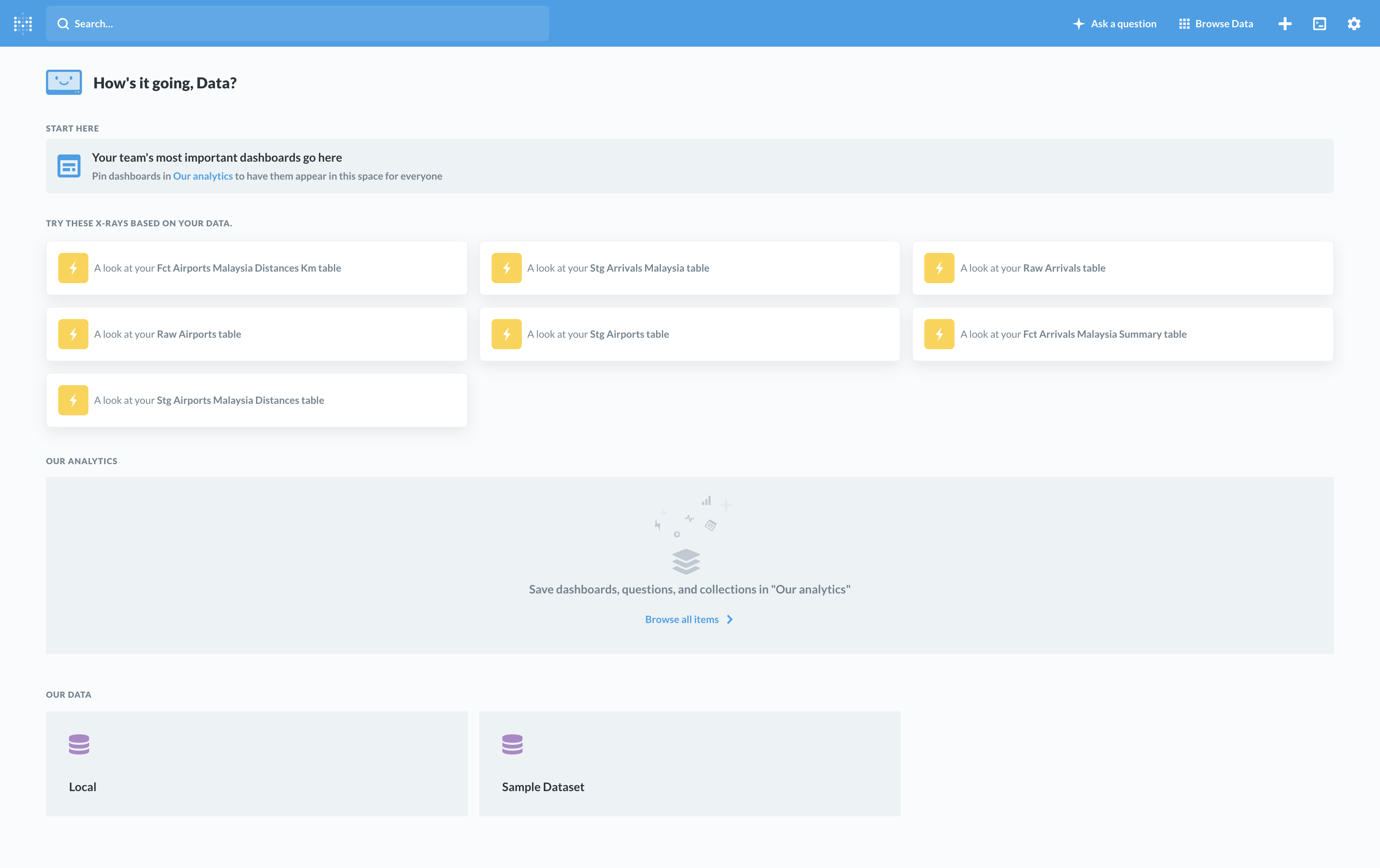

7) Done! You should see the main Metabase page below which lists the available tables in the Postgres Database that was created in Step 2.

8) Unfortunately, there is no export feature to share the dashboard and charts

seen in the Introduction. However, it is just a few simple SELECT * FROM table queries to the

main fct_ and some of the stg_ tables available in the Postgres database. :smile:

Step 4. Viewing dbt documentation

Optional Step!

1) In the ./dbt directory, run the following commands to generate the documentation:

bash

$ cd ./dbt

$ dbt docs generate --profiles-dir ./

2) Once the documentation has been generated, run the following commands to host the documentation website locally:

bash

$ dbt docs serve

3) The documentation website should automatically be launched in your browser.

If it does not, just navigate to [http://localhost:8080/](http://localhost:8080/).

4) Alternatively, you can view the version we hosted on GitHub - dbt documentation.

Step 5. Done

:shipit:

Future Features and Improvements

As this project was only done in more or less 3 days, there were some features or improvements that were not implemented.

Here are a few suggestions:

- Use multiprocessing to scrape websites - Especially for scraping arrivals data as we loop through multiple airports.

- Implement the logging module - Right now, the scripts are using simple

printstatements. However, proper logging will help with debugging. - Data documentation using dbt - Documentation is important, but it is also a time consuming task. Whenever possible, we should document as much as possible the tables that were generated. Your downstream consumers will thank you.

- Use environmental variables for sensitive information - For passwords, in particular.

- Use a more versatile Workflow Orchestration tool - Luigi is simple and easy to get started. But one should consider alternatives like Airflow which can handle complex workflow and has more features.

Author

- Lee Boon Keong - Feb, 2020